Three commands to your first eval

Authenticate

$ eqho-eval auth --key ••••One API key connects you to the Eqho platform and model proxy. No per-provider keys.

Scaffold

$ eqho-eval startSelect a live campaign. Prompts, tools, and test cases are generated automatically.

Evaluate

$ eqho-eval evalRun tests across any combination of models. Results in the terminal, or eqho-eval view for the web UI.

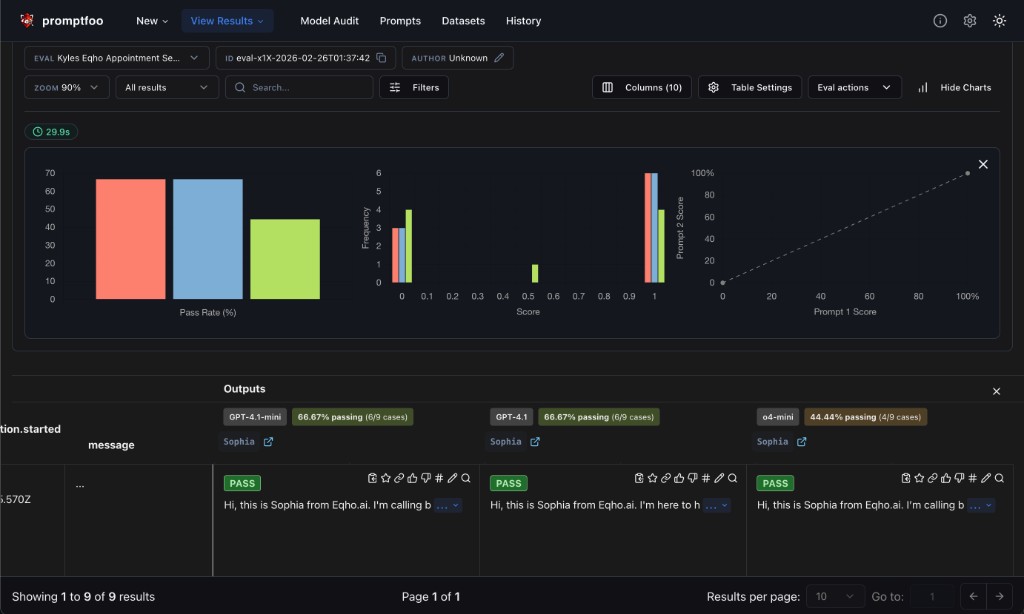

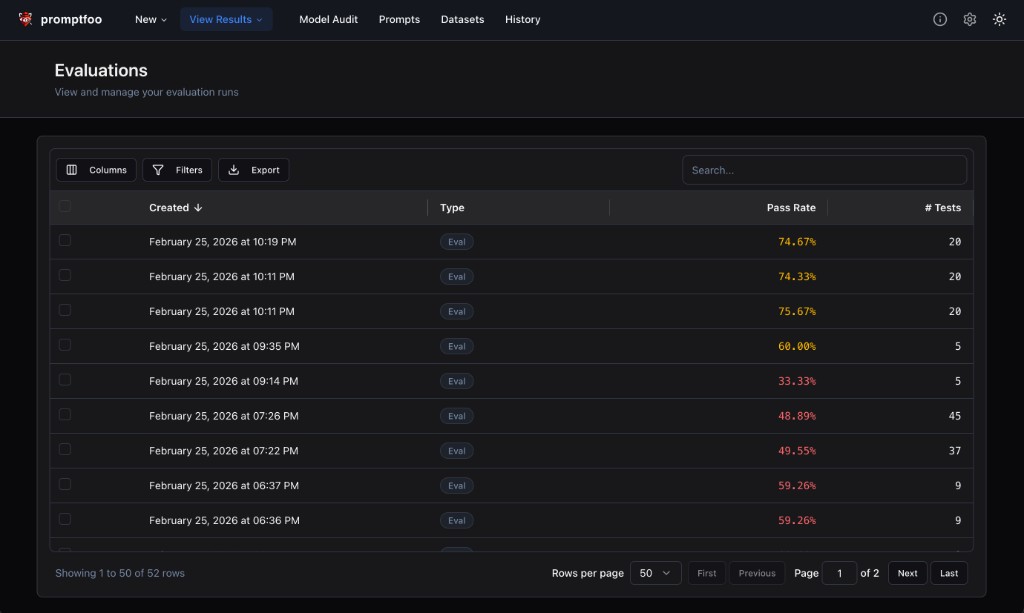

Then visualize everything in the web viewer

Run eqho-eval view to launch the promptfoo dashboard — compare models side-by-side, drill into failures, and track progress over time.

160+ models, one proxy

GPT, Claude, Gemini, Grok, DeepSeek, Llama, Mistral, Qwen, Command — all via a single OpenAI-compatible endpoint. Switch providers by changing one string.

AI-native workflows

Works seamlessly with Claude Code, Cursor, and other coding agents. Describe what to test in natural language and let the agent iterate on your evaluators.

Security & safety

Built-in test patterns for prompt injection, identity leakage, tool call accuracy, and refusal behavior. Catch vulnerabilities before they reach production.

Zero config, zero keys

No .env files, no provider API keys, no boilerplate. The backend proxy handles routing, auth, and rate limits. Just install and go.